HTTP Heuristic Caching (Missing Cache-Control and Expires Headers) Explained

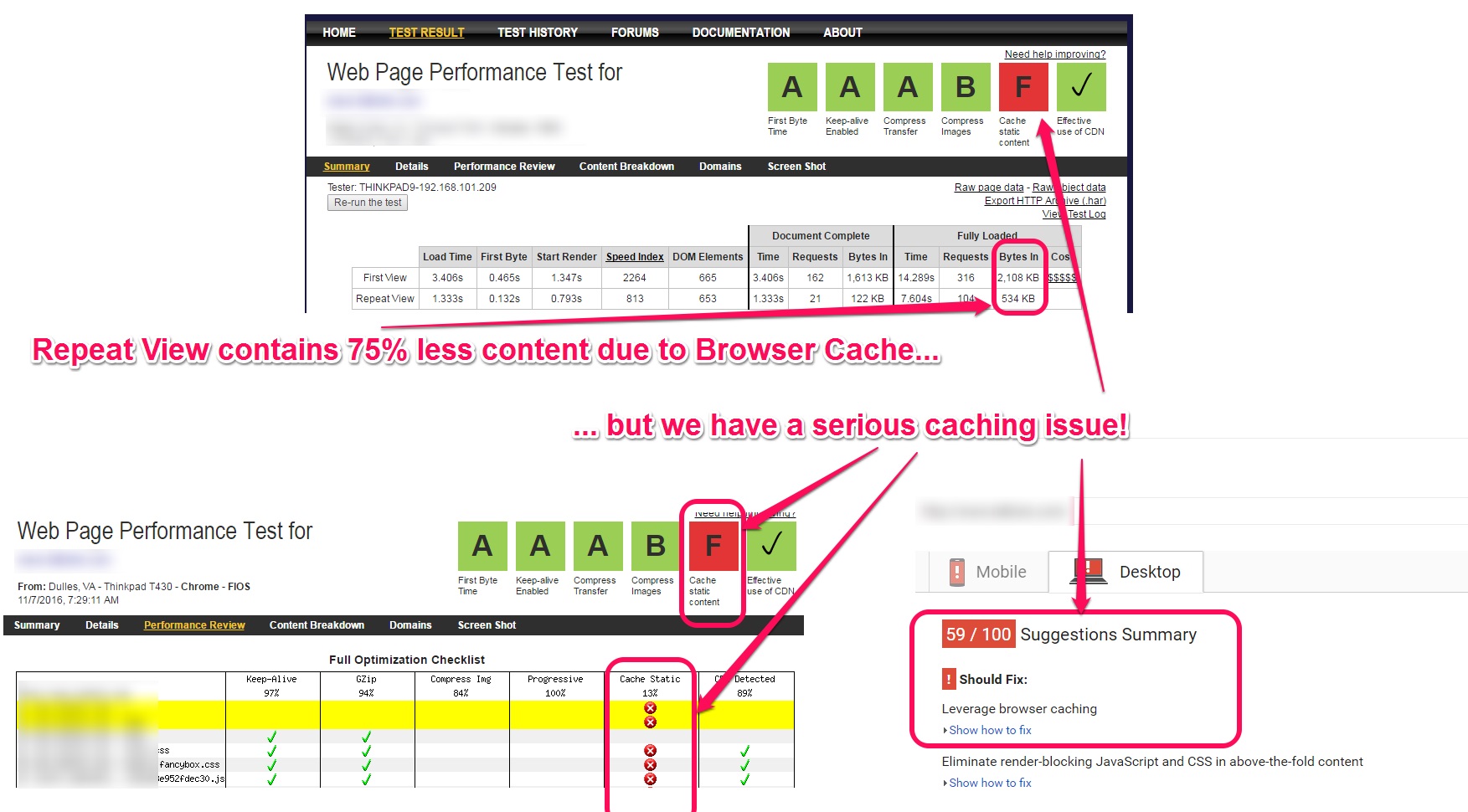

Have you ever wondered why WebPageTest can sometimes show that a repeat view loaded with fewer bytes downloaded, while also triggering warnings related to browser caching? It can seem like the test is reporting an issue that does not exist, but in fact it’s often a sign of a more serious issue that should be investigated. Often the issue is not the lack of caching, but rather a lack of control over how your content is cached.

If you have not run into this issue before, then examine the screenshot below to see an example:

The repeat view shows that we loaded resources from the browser cache, and that seems like a desirable outcome. However, the results are flagging a cache issue, and the site received a poor PageSpeed score for “Caching Static Content”.

Before we dig into the reasons behind this, let’s step back and review some fundamentals…

HTTP Caching Simplified

For an HTTP client to cache a resource, it needs to understand two pieces of information:

- “How long am I allowed to cache this for?”

- “How do I validate that the content is still fresh?”

RFC 7234 covers this in sections 4.2 (Freshness) and 4.3 (Validation).

The HTTP response headers that are typically used for conveying freshness lifetime are:

- Cache-Control (max-age provides a cache lifetime duration)

- Expires (provides an expiration date, Cache-Control max-age takes priority if both are present)

The HTTP response headers for validating the responses stored within the cache, i.e. giving conditional requests something to compare to on the server side, are:

- Last-Modified (contains the date and time at which the object was last modified)

- ETag (provides a unique identifier for the content)

The Cache-Control max-age and Expires headers set freshness lifetimes (i.e., TTLs) and also provide a time-based form of validation. You can think of them as saying “this resource is valid for x seconds” or “cache this resource until <date/time>”. When both are present in a response, the browser will prioritize Cache-Control over the Expires header. When the expiration passes, the browser can send a conditional request using an If-Modified-Since header to ask whether the object has been modified since its Last-Modified date (or since the last version of the resource the browser received). If neither a Cache-Control nor an Expires header is present, then the specification allows the browser to heuristically assign its own freshness lifetime. It suggests that this heuristic can be based on the Last-Modified date.
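The priority rule described above can be sketched in a few lines of Python. This is only an illustration, not how any particular browser implements it: the function name explicit_freshness is made up for this example, it assumes the response carries a Date header, and it ignores directives such as no-cache and no-store.

```python
from datetime import timedelta
from email.utils import parsedate_to_datetime
from typing import Mapping, Optional


def explicit_freshness(headers: Mapping[str, str]) -> Optional[timedelta]:
    """Return the freshness lifetime stated by the origin, or None if there is none."""
    # Cache-Control max-age takes priority over Expires when both are present.
    for directive in headers.get("Cache-Control", "").split(","):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age" and value.isdigit():
            return timedelta(seconds=int(value))

    expires = headers.get("Expires")
    if expires is None:
        # Nothing explicit: a cache may fall back to heuristic freshness.
        return None
    try:
        # Assumption: the response includes a Date header to measure from.
        lifetime = parsedate_to_datetime(expires) - parsedate_to_datetime(headers["Date"])
    except (TypeError, ValueError):
        # An unparseable Expires value (such as "0") means "already expired".
        return timedelta(0)
    return max(lifetime, timedelta(0))
```

A return value of None is the interesting case for the rest of this post, because that is when the browser is left to pick a lifetime on its own.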

ETags are useful for providing unique identifiers to a cached item, but they do not specify cache lifetimes. They simply provide a way of validating the resource via an If-None-Match conditional request.
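To make the validation side concrete, here is a minimal Python sketch of how a client could turn the validators from a stored response into conditional request headers. The helper name conditional_headers is hypothetical, and the header lookup assumes the exact capitalization shown.

```python
from typing import Dict, Mapping


def conditional_headers(cached_response_headers: Mapping[str, str]) -> Dict[str, str]:
    """Build revalidation request headers from a stored response's validators."""
    request_headers: Dict[str, str] = {}
    etag = cached_response_headers.get("ETag")
    last_modified = cached_response_headers.get("Last-Modified")
    if etag:
        # The server compares this value against the current ETag.
        request_headers["If-None-Match"] = etag
    if last_modified:
        # The server compares this date against the current modification time.
        request_headers["If-Modified-Since"] = last_modified
    return request_headers
```

If the server decides the stored copy is still good, it can answer with a 304 Not Modified and no body, which is why a repeat view can transfer so few bytes.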

Example

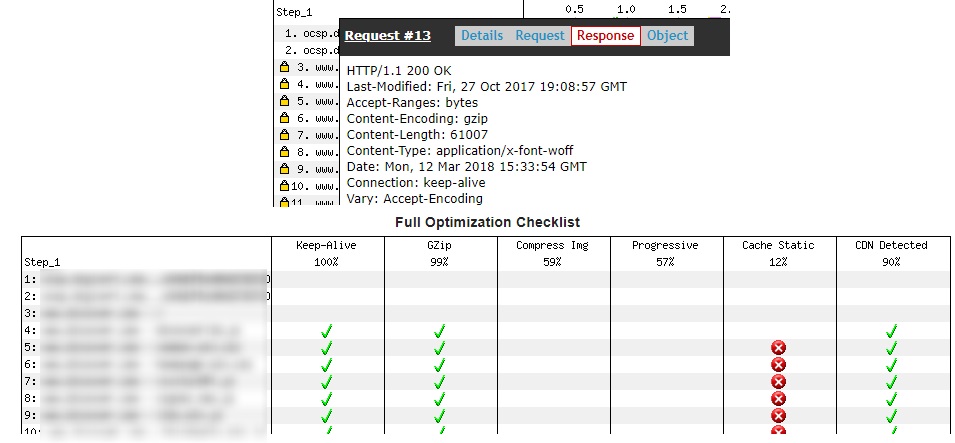

If we examine a request where this is happening you can see that the site is sending a Last-Modified header, but there are no Cache-Control or Expires headers. The absence of these two headers is why WebPageTest has flagged this as an issue with caching static content.
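If you want to look for this pattern in your own responses, a rough check might look like the Python sketch below. The function name is made up for this example, and the substring test on Cache-Control is a deliberate simplification rather than a full parser.

```python
from typing import Mapping


def relies_on_heuristic_freshness(headers: Mapping[str, str]) -> bool:
    """True when a response has Last-Modified but no explicit freshness policy."""
    # Header names are case-insensitive, so normalize before looking them up.
    lowered = {name.lower(): value.lower() for name, value in headers.items()}
    cache_control = lowered.get("cache-control", "")
    # Simplification: treat any of these directives as an explicit policy.
    has_explicit_policy = "expires" in lowered or any(
        directive in cache_control
        for directive in ("max-age", "no-store", "no-cache")
    )
    return "last-modified" in lowered and not has_explicit_policy
```

For example, a response with only Content-Type and Last-Modified headers returns True, while the same response with Cache-Control: max-age=86400 added returns False.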

This object was last modified on October 27th, 2017, but the browser does not know how long to cache it for, which puts us in the unpredictable realm of “Heuristic Freshness”. This can be simplified as “So this is cacheable, but you won’t tell me for how long? I’ll have to guess a TTL and use that”.

This is not to say that there is anything wrong with heuristic freshness. It was originally defined in the HTTP specification so that caches could still add some value in situations where the origin did not set a cache policy, while still being safe. However, it is a loss of control over browser cacheability. The HTTP specification encourages caches to use a heuristic expiration value that is no more than some fraction of the interval since the Last-Modified time. A typical setting of this fraction might be 10%.
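The suggested heuristic is simple enough to write down. The Python sketch below assumes the 10% fraction mentioned above, and the dates in the usage note are hypothetical values chosen to keep the arithmetic easy.

```python
from datetime import datetime, timedelta, timezone


def heuristic_freshness(
    last_modified: datetime, response_date: datetime, fraction: float = 0.10
) -> timedelta:
    """Estimate a freshness lifetime as a fraction of the time since Last-Modified."""
    time_since_modified = response_date - last_modified
    if time_since_modified <= timedelta(0):
        # A Last-Modified date in the future gives the cache nothing to work with.
        return timedelta(0)
    return time_since_modified * fraction


# Hypothetical example: an object modified 100 days before it was served.
served = datetime(2018, 1, 1, tzinfo=timezone.utc)
modified = served - timedelta(days=100)
lifetime = heuristic_freshness(modified, served)
```

With these inputs the lifetime comes out at about 10 days, so the longer an object has gone unchanged, the longer a cache will keep using it without asking.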

How Long Will Browsers Actually Heuristically Cache Content For?

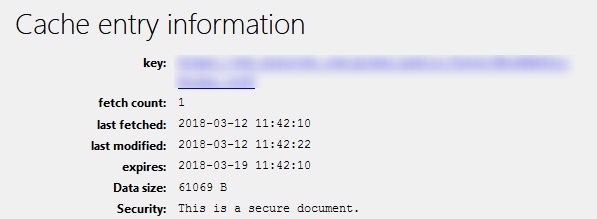

So we know that there are problems with this request, and we also know that the cache TTLs may be assigned heuristically based on the Last-Modified date – but why does Firefox’s about:cache show that this resource is cacheable for 1 week instead of 10% of 136 days?

Chrome’s cache internals do not show the expiration for a cached resource, so its expiration is not easy to determine. Is it 1 week as well? Or 10% of 136 days? Or something else?

Since Firefox, Chrome and WebKit are open source, we can actually dig into the code and see how the spec is implemented. In the Chromium source as well as the WebKit source, we can see that the lifetimes.freshness variable is set to 10% of the time since the object was last modified (provided that a Last-Modified header was returned). This 10%-since-last-modified heuristic matches the suggestion from the RFC. When we look at the Firefox source code, we can see that the same calculation is used; however, Firefox uses the std::min() C++ function to select the lesser of the 10% calculation or 1 week. This explains why we only saw the resource cached for 7 days in Firefox. In Chrome, it would likely have been cached for 13 or 14 days (10% of 136 days is 13.6 days).
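The difference between the two behaviors is easy to see with the 136-day example. The Python below is only a paraphrase of the logic described above, not the browsers’ actual code, and the function names are made up for this comparison.

```python
from datetime import timedelta

ONE_WEEK = timedelta(weeks=1)


def chromium_style_freshness(time_since_modified: timedelta) -> timedelta:
    """10% of the time since Last-Modified, with no upper bound applied here."""
    return time_since_modified / 10


def firefox_style_freshness(time_since_modified: timedelta) -> timedelta:
    """The same 10% calculation, capped at one week."""
    return min(time_since_modified / 10, ONE_WEEK)


time_since_modified = timedelta(days=136)
print(chromium_style_freshness(time_since_modified))  # 13 days, 14:24:00
print(firefox_style_freshness(time_since_modified))   # 7 days, 0:00:00
```

The same response ends up with two different lifetimes depending on the browser, which is exactly the kind of inconsistency that makes heuristic caching hard to reason about.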

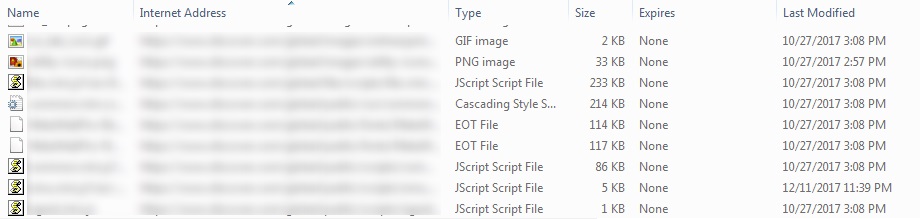

With Internet Explorer 11, we can look at the Temporary Internet Files to see how the browser handles heuristic freshness. This is similar to the about:cache investigation we did for Firefox, but it’s the only visibility we have to work with. To do this, clear your browser cache, then open a page where objects are being heuristically cached. Then view the files in C:\Users\<username>\AppData\Local\Microsoft\Windows\Temporary Internet Files. The screenshot below shows that there is no expiration for the resources cached, although it’s not clear whether a heuristic is used.

This appears to have been configurable in earlier versions of IE. I’m not sure how heuristic caching is implemented in Edge yet – but will update this when I find out.

Summary

Many content owners incorrectly believe that omitting Cache-Control and Expires headers will prevent downstream caching. In fact, the opposite is true. A worst-case scenario of this type of issue would be to combine heuristic caching with infrequent updates to non-versioned CSS/JS objects. Such a scenario would result in page breakage for some clients, while being extremely difficult to reproduce and troubleshoot across different browsers. Setting the proper cache headers avoids this, and it’s simple to do, either on your web servers or within your CDN configuration.

If you want to ensure that freshness lifetimes are explicitly stated in your HTTP responses, the best way to do this would be to always include a Cache-Control header. At a minimum, you should be including no-store to prevent caching or max-age=<seconds> to indicate how long content should be considered fresh.
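As one way to guarantee that a Cache-Control header is always present, here is a minimal sketch of a WSGI middleware for a Python application. The function name add_default_cache_control and the one hour default are assumptions made for this example, so pick a value that suits your content. Most web servers and CDNs can do the same thing in configuration.

```python
from typing import Callable


def add_default_cache_control(app: Callable, default: str = "max-age=3600") -> Callable:
    """Wrap a WSGI app so every response carries a Cache-Control header."""

    def middleware(environ, start_response):
        def patched_start_response(status, headers, exc_info=None):
            # Only add the default when the app did not set its own policy.
            present = {name.lower() for name, _ in headers}
            if "cache-control" not in present:
                headers = list(headers) + [("Cache-Control", default)]
            return start_response(status, headers, exc_info)

        return app(environ, patched_start_response)

    return middleware
```

Responses that already set Cache-Control, for example no-store on sensitive pages, pass through unchanged, so the default only fills the gaps that would otherwise be left to heuristics.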

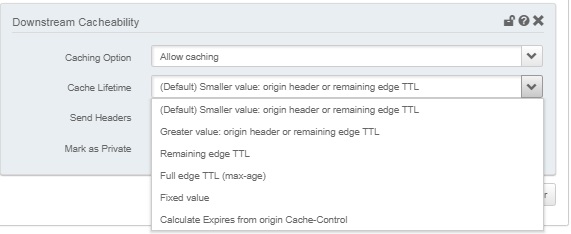

If you are an Akamai customer, it’s easy to create a downstream caching behavior that will automatically update your Cache-Control and Expires headers based on either a static time value or the remaining freshness of the object on the CDN.

Many thanks to Yoav Weiss and Mark Nottingham for reviewing this and providing feedback.